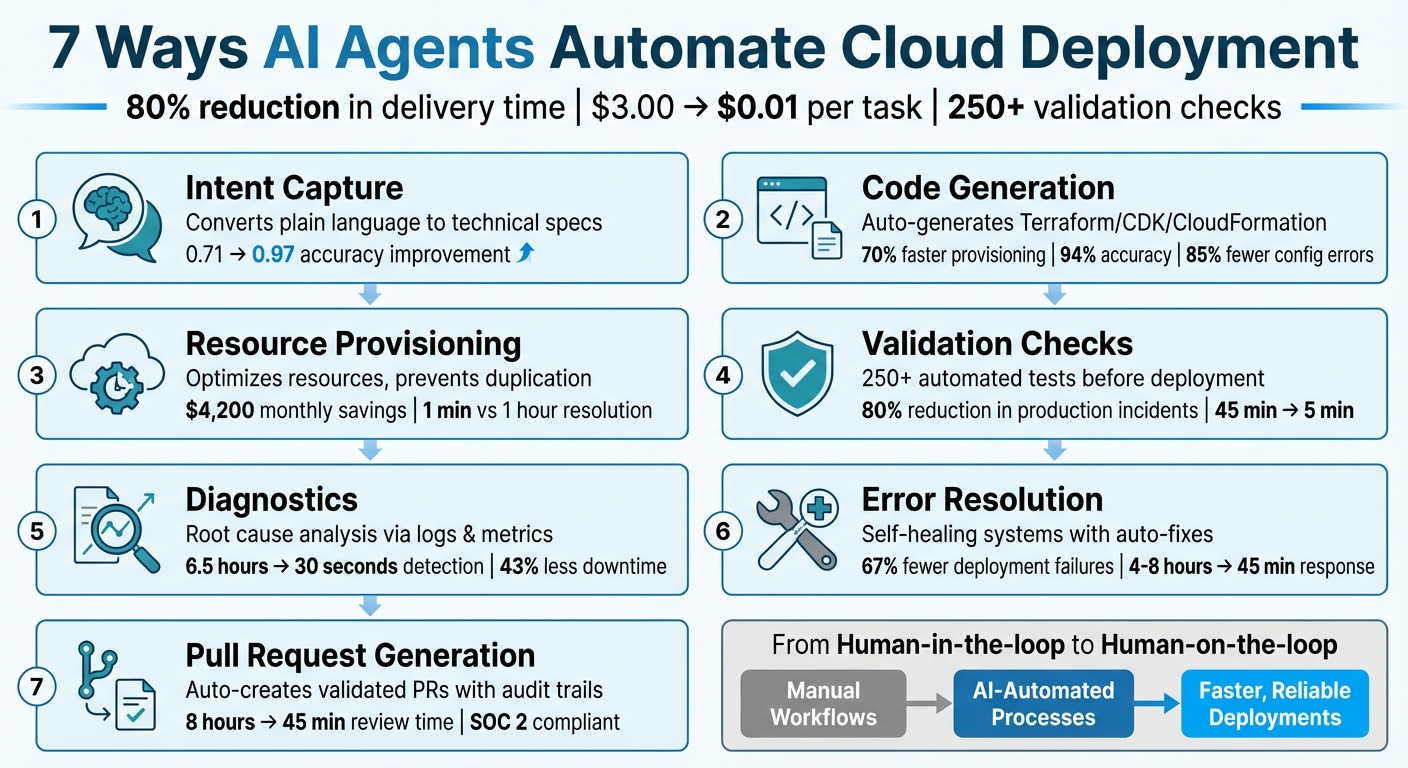

7 Ways AI Agents Automate Cloud Deployment

AI agents are transforming how cloud deployments are managed. These tools, powered by advanced language models, handle tasks like infrastructure code generation, resource provisioning, and error resolution autonomously. By shifting from manual workflows to automated processes, teams using AI agents report delivery times reduced by 80% and the cost of a support task cut from about $3.00 to $0.01. Agents also improve reliability through continuous validation and self-healing systems.

Key capabilities include:

- Intent Capture: Converts plain language requests into technical specifications, reducing miscommunication and errors.

- Code Generation: Automatically generates infrastructure code (e.g., Terraform) aligned with existing setups.

- Resource Provisioning: Optimizes resources, avoiding duplication and saving costs.

- Validation Checks: Runs extensive tests to ensure deployments are secure and compliant.

- Diagnostics: Quickly identifies and resolves deployment issues using logs and metrics.

- Error Resolution: Fixes errors autonomously and redeploys updated configurations.

- Pull Request Generation: Creates validated pull requests with detailed audit trails for transparency.

These features allow DevOps teams to focus on oversight rather than manual execution, making cloud operations faster, more efficient, and reliable.

How AI Changes Cloud Deployment

Traditional cloud deployments often hit a bottleneck. Engineers spend time manually deploying code, deciphering logs, and fixing issues before production can move forward. This process creates delays and inefficiencies that slow down delivery.

AI agents are changing the game by automating these tasks. Instead of relying on static scripts, these agents adapt and solve problems in real time. For instance, if a deployment fails, AI can analyze logs, identify the root cause, make necessary updates, and redeploy - all without human intervention. This approach allows teams to focus on overseeing the process rather than managing every detail themselves.

A great example of this shift is Kanu AI, which uses three specialized agents to streamline cloud operations. The Intent Agent starts by gathering requirements, asking clarifying questions, and learning team preferences to avoid miscommunication before any code is written. The DevOps Agent takes over by generating both application code and infrastructure-as-code (using tools like Terraform, CDK, or CloudFormation) in sync. It continuously refines these outputs based on real deployment feedback. Finally, the Q/A Agent performs over 250 validation checks on live systems, ensuring everything runs smoothly along actual data paths before human review is needed. Together, these agents create a seamless workflow.

The impact is clear. Companies using AI agents report an 80% reduction in delivery time, going from requirements to production significantly faster. Tasks that once required 45 minutes of a senior engineer's time are now completed in just 5 minutes. In one instance, an Optimizer Agent identified over-provisioned clusters and recommended Terraform updates, saving approximately $4,200 every month.

This evolution in cloud deployment doesn't just mean faster results - it means smarter, more efficient operations. AI is reshaping the way infrastructure is deployed and managed.

1. Intent Capture and Clarification

Automation Capabilities

AI agents are changing the way engineers handle repetitive tasks. Instead of writing boilerplate code or manually configuring YAML files, teams can now describe their needs in plain language. For example, an engineer might say, "I need a resilient, PCI-compliant message queue", and the agent will convert that into detailed infrastructure configurations automatically.

These agents don't just stop at initial requests. They engage in follow-up conversations to clarify tradeoffs, propose architectural options, and even learn team preferences for future tasks. This reduces the back-and-forth typically needed during deployments. Additionally, the agents ensure that all configurations align with the organization's "Golden Standards" and compliance policies, keeping everything on track with company requirements.
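As a rough illustration of the intent-capture step, the sketch below turns the example request into a structured spec and lists the questions an agent would still need to ask. It is a minimal sketch under stated assumptions: `QueueSpec`, its fields, and the keyword matching are hypothetical stand-ins, and a real agent would use a language model rather than string checks.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class QueueSpec:
    """Hypothetical structured form of a message-queue request."""
    resilient: bool = False
    compliance: List[str] = field(default_factory=list)
    region: Optional[str] = None          # not stated in the request
    retention_days: Optional[int] = None  # not stated in the request


def capture_intent(request: str) -> QueueSpec:
    """Extract what the request states explicitly (keyword stand-in for an LLM)."""
    text = request.lower()
    spec = QueueSpec(resilient="resilient" in text)
    if "pci" in text:
        spec.compliance.append("PCI-DSS")
    return spec


def clarifying_questions(spec: QueueSpec) -> List[str]:
    """Ask about every field the request left open instead of guessing."""
    questions = []
    if spec.region is None:
        questions.append("Which region should the queue run in?")
    if spec.retention_days is None:
        questions.append("How many days should messages be retained?")
    return questions


spec = capture_intent("I need a resilient, PCI-compliant message queue")
for question in clarifying_questions(spec):
    print(question)
```

The point of the design is that unanswered fields stay `None` and become questions, so nothing is silently defaulted before code generation starts.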

Deployment Efficiency

Once the intent is captured, AI agents make deployment faster and more seamless. Engineers can skip the tedious step of translating requirements into technical specs and move straight to having infrastructure deployed. This streamlined process significantly shortens the deployment timeline.

Error Reduction

Speeding up deployments doesn’t mean cutting corners. AI agents reduce errors by addressing potential issues early: asking clarifying questions upfront prevents costly mistakes before deployment even begins. For example, the NSync agentic system raised infrastructure-as-code reconciliation accuracy from 0.71 to 0.97 pass@3 (the share of tasks solved within three attempts) compared with traditional methods.

Unlike traditional automation tools that often stumble in unexpected scenarios, AI agents excel by using contextual reasoning. They adapt their approach based on the unique deployment environment, ensuring smoother operations. This proactive approach to error reduction highlights their growing role in fully automated cloud management.

2. Automated Infrastructure Code Generation

AI agents have taken infrastructure management to a whole new level by automating the process of turning requirements into infrastructure code.

Automation Capabilities

Imagine describing your needs in plain language - something as simple as, "Create a 3-node Kubernetes cluster with GPU support." AI agents can now transform such instructions into production-ready infrastructure code. They even cross-check .tfstate files, existing VPC setups, and compliance policies to avoid resource conflicts. Additionally, specialized agents can take your current cloud resources and convert them into version-controlled code.

These agents operate using a Cognitive Loop. They analyze the environment, pull relevant runbooks and state data, figure out the best approach, and execute the code. The result? A faster, smarter process. For instance, Stripe's implementation of such automation cut provisioning time by 70%.

Deployment Efficiency

The time savings are staggering. Tasks that once took days - like planning, research, and code generation - are now done in minutes. Tools like GitHub Copilot and Uber's context-aware platform have shown that productivity can jump by as much as 55%, while configuration errors drop by 85%. InfraLLM, a specialized model, has even hit 94% accuracy in generating configurations as of late 2025.

Error Reduction

Error detection is another major win. Systems like Cloudflare’s validation pipeline catch 99.5% of issues before deployment. These agents perform multi-step checks using commands such as terraform fmt and terraform validate, and they can even generate patches to fix problems.
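The multi-step check described above could look roughly like the following sketch. `terraform fmt -check -recursive` and `terraform validate -no-color` are standard Terraform CLI invocations; the wrapper functions, their names, and the injectable `runner` parameter are assumptions made for illustration. Note that `terraform validate` expects the module to have been initialized with `terraform init` first.

```python
import subprocess
from typing import Callable, List, Sequence, Tuple

# Each entry is (check name, command). The commands are real Terraform CLI calls.
CHECKS: List[Tuple[str, List[str]]] = [
    ("format", ["terraform", "fmt", "-check", "-recursive"]),
    ("validate", ["terraform", "validate", "-no-color"]),
]


def run_cli(cmd: Sequence[str], cwd: str) -> Tuple[int, str]:
    """Run a command in cwd and return (exit code, combined output)."""
    proc = subprocess.run(list(cmd), cwd=cwd, capture_output=True, text=True)
    return proc.returncode, proc.stdout + proc.stderr


def validate_module(
    cwd: str,
    runner: Callable[[Sequence[str], str], Tuple[int, str]] = run_cli,
) -> List[Tuple[str, str]]:
    """Run every check and return (check name, output) for each failure."""
    failures = []
    for name, cmd in CHECKS:
        code, output = runner(cmd, cwd)
        if code != 0:
            failures.append((name, output.strip()))
    return failures
```

An agent would feed the returned failure output back into its next generation attempt and rerun `validate_module` until the list is empty. Passing a fake `runner` makes the loop testable without Terraform installed.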

"Managing Terraform code manually was time-consuming, error-prone, and required constant validation." - Anoop Kumar, Lead DevOps Consultant, Rackspace Technology

Integration with Existing Cloud Systems

AI agents seamlessly fit into existing DevOps workflows. Using the Model Context Protocol (MCP), they interact directly with live systems, running sandbox tests before opening pull requests. Policy-as-code guardrails, such as those implemented with Open Policy Agent, ensure that deployments are safe and compliant.

This level of integration not only simplifies the process but also makes it safer and more efficient.

3. Resource Provisioning and Analysis

Once the infrastructure code is ready, AI agents step in to handle resource provisioning and analyze existing environments. This process ensures deployments are efficient, cost-conscious, and conflict-free, continuing the streamlined workflow discussed earlier.

Automation Capabilities

AI agents excel at Resource Analysis, scanning your cloud setup to avoid duplicating resources, identifying unused assets, and spotting architectural inconsistencies. They can also translate high-level requirements into detailed configurations without needing you to specify every single parameter.

Another standout feature is predictive provisioning. By analyzing historical usage data, AI agents recommend resource limits and instance sizes that balance performance with cost, which complements automated code generation by keeping resource configurations optimized.

Agents can also act on what they observe in running environments. In early 2025, DigitalOcean introduced the Cloudways AI agent in a private preview with 250 customers. Acting as an autonomous SRE (Site Reliability Engineer), it resolved issues like disk usage spikes and DDoS attacks in about one minute, down from an average of one hour.
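One simple way to picture predictive provisioning is percentile-based right-sizing: take historical usage, pick a high percentile, and add headroom. The sketch below assumes CPU usage samples in millicores; the instance catalogue, the 95th percentile, and the 20% headroom are hypothetical choices, not values from any specific product.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical instance catalogue: (name, CPU capacity in millicores).
INSTANCE_SIZES: List[Tuple[str, int]] = [
    ("small", 1000),
    ("medium", 2000),
    ("large", 4000),
]


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_cpu_limit(usage: Sequence[float], pct: int = 95, headroom_pct: int = 20) -> int:
    """Recommend a limit: the chosen usage percentile plus a safety margin."""
    return math.ceil(percentile(usage, pct) * (100 + headroom_pct) / 100)


def pick_instance(limit: int) -> str:
    """Choose the smallest instance that fits, or the largest one available."""
    for name, capacity in INSTANCE_SIZES:
        if capacity >= limit:
            return name
    return INSTANCE_SIZES[-1][0]


history = list(range(100, 2100, 100))  # 20 samples: 100, 200, ..., 2000 millicores
limit = recommend_cpu_limit(history)
print(limit, pick_instance(limit))
```

Using a percentile rather than the maximum keeps a single spike from driving the recommendation, while the headroom covers growth between reviews.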

Deployment Efficiency

AI agents drastically cut deployment times, reducing them from hours to mere minutes. Troubleshooting tasks that would take human support 40 minutes can now be completed in about one minute. The cost savings are equally impressive - a 10-minute human-led support session costs around $3.00, while an AI agent can handle the same task for just a penny, thanks to token-based pricing.

Error Reduction

To prevent issues from reaching production, AI agents run automated validation checks on provisioned resources. These checks are described in detail in the Validation Checks section that follows.

"Automation is deterministic. It only does exactly what you tell it to do... We are graduating from Generative AI, which writes the script for you, to Agentic AI, which runs the script, sees the error, analyzes the logs, debugs the root cause, and applies the fix."

– Aditya Kashyap, Solutions Architect, Harness

Integration with Existing Cloud Systems

AI agents also integrate seamlessly with current cloud workflows. They work across various interfaces, such as SDKs, CLIs, and Infrastructure-as-Code platforms like Terraform or CDK, as well as web-based portals. Unlike static automation, which relies on predefined rules, agentic AI makes real-time decisions about resource provisioning, de-provisioning, and workload distribution across availability zones or even different cloud providers.

For instance, in February 2026, SS&C Global Investor and Distribution Solutions implemented the SS&C AI Gateway with their Chorus orchestration layer. This integration allowed AI agents to manage customer communications, tripling response speeds and saving an impressive 886,000 hours annually.

To ensure accuracy, AI agents rely on centralized metrics, logs, and traces. Initially, it's recommended to deploy these agents in supervised mode, allowing humans to review their suggestions before granting full autonomy.

4. Validation Checks

Validation checks are a cornerstone of any efficient deployment process, ensuring that systems maintain their performance and reliability. Once provisioning is complete, AI agents carry out layered validation processes - ranging from syntax checks to live behavior testing - to guarantee deployments that are secure, compliant, and dependable.

Automation Capabilities

AI agents bring an added layer of precision to validation through multi-tool processes. They employ iterative reasoning loops to generate code, validate it against enterprise standards, and resolve any issues until all checks are cleared.

For example, in October 2025, Anoop Kumar, a Lead DevOps Consultant at Rackspace Technology, created a Terraform AI Agent specifically for automating Azure infrastructure deployments. This agent performs three essential validation steps: formatting, syntax checks, and security scans. By verifying infrastructure before deployment, it eliminated potential errors like hallucinations and resource duplication, cutting deployment times from hours to just minutes.

Advanced systems like Kanu take automation even further, running over 250 checks on deployed systems. These checks include verifying behavior through real endpoints and data paths. Some agents act as security gatekeepers, automatically approving, rejecting, or flagging pull requests based on vulnerability levels. For instance, they can reject a pull request if an Application Load Balancer lacks an HTTPS listener.
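The gatekeeper behavior described above can be sketched as two small functions: one that produces findings and one that maps findings to a decision. This is an illustrative sketch only. The input shape (a mapping from load balancer name to its listener protocols) and the severity labels are assumptions, not the format of any particular tool.

```python
from typing import Dict, List


def alb_findings(listeners_by_alb: Dict[str, List[str]]) -> List[dict]:
    """Report every load balancer whose listeners do not include HTTPS."""
    return [
        {"resource": alb, "severity": "CRITICAL", "message": "no HTTPS listener"}
        for alb, protocols in sorted(listeners_by_alb.items())
        if "HTTPS" not in protocols
    ]


def gate(findings: List[dict]) -> str:
    """Map findings to a pull request decision: approve, flag, or reject."""
    severities = {finding["severity"] for finding in findings}
    if severities & {"CRITICAL", "HIGH"}:
        return "reject"
    if severities:
        return "flag"  # lower-severity findings go to a human reviewer
    return "approve"


proposed = {"web": ["HTTP"], "api": ["HTTP", "HTTPS"]}
print(gate(alb_findings(proposed)))
```

Keeping detection and decision separate means new checks only add findings, while the approval policy stays in one place.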

This thorough validation process plays a crucial role in minimizing production incidents and ensuring smooth operations.

Error Reduction

In December 2025, DuploCloud introduced six specialized AI DevOps Engineers across 34 organizations spanning healthcare, SaaS, and government sectors. These agents, equipped to correlate logs and metrics, reduced the time needed to resolve Kubernetes incidents from 45 minutes manually to just 5 minutes through an automated process requiring only a single approval click. Organizations leveraging AI-driven validation have reported up to an 80% drop in production incidents.

"AI automation must not compromise on efficiency, reliability, and scalability of cloud operations. An agentic design needs to go beyond simply prompting an LLM model and hoping for the best; rather, various guardrails and a combination of neural and symbolic steps are needed for high assurance." – Zhenning Yang et al., University of Michigan

These validation processes not only reduce errors but also integrate effortlessly into existing development workflows, enhancing operational efficiency.

Integration with Existing Cloud Systems

AI agents are designed to fit seamlessly into CI/CD pipelines, functioning as mandatory gates that embed compliance into the workflow. They work hand-in-hand with infrastructure-as-code tools like Terraform, Pulumi, and CloudFormation, automating the generation, validation, and reconciliation of configurations. Additionally, integration with policy-as-code frameworks such as Open Policy Agent and HashiCorp Sentinel enables real-time enforcement of standards for security, cost management, and resource tagging.

These agents also leverage request-level isolation and sandbox environments to run high-fidelity tests without duplicating entire cloud stacks. One enterprise reported saving $4 million annually by transitioning from full-stack duplication to request-level sandboxing. Furthermore, they monitor live signals - including logs, metrics, and traces - to diagnose failures in real time. The results are then fed back into the deployment cycle, enabling autonomous remediation.

5. Log and Metric Diagnostics

When a deployment goes live, AI agents take over with continuous monitoring, keeping an eye on logs and metrics to catch potential issues before they snowball into major outages. Unlike older monitoring systems that depend on fixed thresholds, these AI agents use statistical learning to establish baselines and flag unusual behavior automatically. This shifts incident response from a reactive scramble to a proactive, data-driven approach, seamlessly leading into automated diagnostics.
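To make the contrast with fixed thresholds concrete, here is a minimal sketch of a learned baseline using a rolling z-score. It is one simple statistical technique chosen for illustration, not necessarily what any given agent uses; the window size and threshold are assumed values.

```python
from statistics import mean, stdev
from typing import List, Sequence


def find_anomalies(values: Sequence[float], window: int = 10, threshold: float = 3.0) -> List[int]:
    """Return indexes whose value deviates strongly from the preceding window."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline: any change at all is unusual.
            if values[i] != mu:
                anomalies.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


latency_ms = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 100, 450, 102]
print(find_anomalies(latency_ms))
```

Because the baseline is recomputed from recent data, the same code adapts to a service that normally runs at 100 ms and one that normally runs at 2 s, which a single static threshold cannot do.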

Automation Capabilities

The moment an alert is triggered, AI agents kick off root cause analysis by pulling relevant logs, metrics, and traces. They connect the dots between different signals - like linking a spike in latency to a specific log exception or tracing a failed request path - to create a detailed timeline of the issue.

Take this example: an engineer used Kanu to fix a broken Nginx service in a Kubernetes cluster. The AI agent identified a manual selector mismatch causing pod connectivity problems, applied a configuration patch, and confirmed the fix - all through simple natural language prompts.

Deployment Efficiency

These diagnostic features also speed up incident response. For instance, a team managing 35 microservices on Azure Kubernetes Service cut their response time from 4–8 hours to just 45 minutes thanks to automated root cause analysis. JP Morgan Chase saw a 38% drop in critical incidents and halved their mean time to resolution using their agentic platform. During Black Friday 2022, Shopify relied on an AI system for automated provisioning and monitoring that handled a 400% traffic surge without any manual intervention, all while maintaining 99.99% uptime.

By integrating AI-driven diagnostics, teams can resolve incidents in minutes instead of hours, using natural language queries to replace time-consuming documentation searches.

Error Reduction

AI diagnostics also cut down on alert noise by filtering out redundant notifications and grouping similar anomalies. This allows teams to zero in on critical issues instead of wasting time on false positives.

Using machine learning to detect and fix infrastructure drift can reduce system downtime by an average of 43%. Predictive scaling powered by AI has also shown cost savings of 25–30% compared to traditional auto-scaling methods. Additionally, mean time to detect issues can plummet from 6.5 hours to just 30 seconds when AI steps in early. For one enterprise using microservices, monthly production incidents were reduced by 70%.

AI agents also analyze historical patterns to flag potential bottlenecks or configuration problems before they cause any disruptions, transitioning operations from reactive fixes to predictive maintenance. These advanced diagnostic methods form the backbone of a self-healing infrastructure that complements earlier automation efforts.

Integration with Existing Cloud Systems

Kanu’s diagnostic insights integrate smoothly with popular observability tools like Amazon CloudWatch, Datadog, New Relic, Splunk, and Dynatrace. It operates as a single-tenant service within the user’s existing cloud account or Kubernetes cluster, adhering to established security, networking, and identity controls. Integration often uses the Model Context Protocol, enabling secure communication with external monitoring systems. Kanu also connects to alerting platforms like PagerDuty and Slack via webhooks, launching diagnostic sessions as soon as incidents occur.

Unlike static AI models, Kanu processes high-cardinality logs, metrics, and traces to pinpoint failures rather than relying solely on code analysis. If deployment validation fails, Kanu reads logs, updates infrastructure code, and triggers a redeployment - all without manual intervention. This seamless integration and automation make it a powerful tool for maintaining system reliability.

6. Error Resolution and Redeployment

When an error pops up, AI agents don’t just sound the alarm - they step in to resolve it and redeploy updated configurations. This process builds on earlier automated provisioning and validation steps, shifting cloud operations from reactive problem-solving to proactive, self-healing systems. By automating recovery, these agents drastically reduce downtime and limit the need for human intervention.

Automation Capabilities

AI agents rely on a three-tiered framework - Detection, Reasoning, and Action - to monitor logs, identify issues, and implement fixes like rollbacks or restarting pods. Instead of following rigid if-then scripts, they use a dynamic “Cognitive Loop” to observe, plan, and act.

"Automation is deterministic. It only does exactly what you tell it to do... We are now graduating from Generative AI, which writes the script for you, to Agentic AI, which runs the script, sees the error, analyzes the logs, debugs the root cause, and applies the fix."

- Aditya Kashyap, Solutions Architect, Harness

These agents can analyze error messages and stack traces against millions of historical incidents, pinpointing root causes in seconds. Some systems employ a multi-agent model: a Diagnoser to detect the failure, a Validator to confirm the safety of fixes, and a Remediator to implement the solution. This approach completes the loop from identifying the problem to applying automated fixes.
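The Diagnoser, Validator, and Remediator roles can be sketched as three small functions chained together. Everything named here is hypothetical: the signature table, the list of safe actions, and the `apply` callback stand in for whatever a real system would use to match incidents and execute fixes.

```python
from typing import Callable, Dict, Optional, Sequence

# Hypothetical mapping from a log signature to a proposed fix.
KNOWN_FIXES: Dict[str, str] = {
    "CrashLoopBackOff": "rollback",
    "OOMKilled": "raise_memory_limit",
}

# Actions the agent may apply on its own; anything else is escalated.
SAFE_ACTIONS = {"rollback", "restart_pod"}


def diagnose(log_lines: Sequence[str]) -> Optional[str]:
    """Diagnoser: return the proposed fix for the first known signature."""
    for line in log_lines:
        for signature, fix in KNOWN_FIXES.items():
            if signature in line:
                return fix
    return None


def validate(action: str) -> bool:
    """Validator: confirm the proposed fix is on the safe list."""
    return action in SAFE_ACTIONS


def remediate(log_lines: Sequence[str], apply: Callable[[str], None]) -> str:
    """Remediator: apply a validated fix, otherwise escalate to a human."""
    action = diagnose(log_lines)
    if action is None:
        return "escalate: no known cause"
    if not validate(action):
        return f"escalate: {action} needs human approval"
    apply(action)
    return f"applied: {action}"


applied = []
print(remediate(["pod web-1 entered CrashLoopBackOff"], applied.append))
```

Separating validation from remediation means an unfamiliar or risky fix is never executed just because the diagnosis step proposed it.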

Deployment Efficiency

Automating error resolution significantly speeds up recovery. For instance, in October 2025, a SaaS platform running 35 microservices on Azure Kubernetes Service reduced deployment failures by 67% and cut incident response times from 4–8 hours to just 45 minutes over four months. This improvement saved $420,000 annually by reducing engineering workload and minimizing the impact of incidents. Additionally, AI-driven root cause analysis slashes investigation time from hours - often 3–10 hours - to as little as 15 seconds. This efficiency helps close the gap caused by manual log analysis.

Error Reduction

AI predictive models assess deployment risks by analyzing factors like change size and timing. High-risk deployments can be blocked before reaching production, preventing potential failures. These models also catch silent issues, such as configuration drift, that might otherwise go unnoticed.
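A deployment risk score based on change size and timing might be sketched as follows. The weights, caps, and blocking limit are invented for illustration; a production model would learn them from incident history rather than hard-code them.

```python
from datetime import datetime


def risk_score(lines_changed: int, files_changed: int, when: datetime) -> int:
    """Score a deployment from change size and timing (hypothetical weights)."""
    score = min(lines_changed // 100, 5)   # larger diffs are riskier, capped at 5
    score += min(files_changed // 10, 3)   # wide changes are riskier, capped at 3
    if when.weekday() >= 4:                # Friday, Saturday, or Sunday
        score += 2
    if when.hour < 8 or when.hour >= 18:   # outside staffed hours
        score += 1
    return score


def should_block(score: int, limit: int = 6) -> bool:
    """Block the deployment when the score reaches the limit."""
    return score >= limit


friday_evening = datetime(2025, 10, 3, 19, 0)
score = risk_score(lines_changed=600, files_changed=25, when=friday_evening)
print(score, should_block(score))
```

A blocked deployment would typically be routed to a human for approval or rescheduled, rather than dropped.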

For best results, structured JSON logging is recommended. This format allows precise parsing of events and captures key details like project IDs, commit SHAs, and environment states (e.g., Node or Ruby versions, memory availability, disk space). Clear error handling that distinguishes between expected and actual outcomes further enables agents to propose targeted fixes.
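A minimal sketch of structured JSON logging with Python's standard `logging` module is shown below. The `context` key passed through `extra`, and the specific field names and values, are assumptions chosen to mirror the details listed above.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # "context" is a hypothetical field supplied via logging's `extra` argument.
        payload.update(getattr(record, "context", {}))
        return json.dumps(payload, sort_keys=True)


def make_logger(name: str, stream) -> logging.Logger:
    """Create a logger that writes JSON lines to the given stream."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


buffer = io.StringIO()
log = make_logger("deploy", buffer)
log.error(
    "build failed",
    extra={"context": {
        "project_id": "demo-project",  # illustrative values only
        "commit_sha": "abc123",
        "node_version": "20.11.0",
        "expected": "exit code 0",
        "actual": "exit code 1",
    }},
)
print(buffer.getvalue())
```

Because every event is one JSON object, an agent can parse it with `json.loads` and compare the `expected` and `actual` fields directly instead of pattern-matching free text.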

Integration with Existing Cloud Systems

AI agents seamlessly integrate into current DevOps workflows, ensuring secure and compliant fixes. They operate as single-tenant services within a company’s VPC or Kubernetes cluster, maintaining compatibility with existing networking, identity, and security protocols. These agents work with popular DevOps tools like Terraform, Kubernetes, GitHub Actions, and cloud-native monitoring platforms to streamline operations across environments.

Many AI systems also include a "Save & Deploy" or "Approve" step for human oversight on high-impact changes. For example, Kanu operates within pre-defined boundaries and adheres to SOC 2 Type II compliance, ensuring all automated fixes align with the same security standards as manual changes. By integrating directly into pull request workflows, these agents help identify security risks and potential cost increases before code is merged.

7. Pull Request Generation and Audit Trails

After resolving errors automatically, AI agents complete the deployment process by creating production-ready pull requests. These pull requests are only generated once infrastructure changes have been thoroughly validated in a test environment. Tools like Kanu ensure no pull request is issued until the system is confirmed to operate reliably in your setup. This approach eliminates the risky practice of submitting untested code, with the AI agent acting as the final checkpoint, turning validated changes into production-ready commits - complete with detailed documentation. By aligning this step with previous automation and validation efforts, the entire deployment process becomes both efficient and reliable.

Automation Capabilities

Modern AI agents handle pull request creation through event-driven workflows. For example, when a CI pipeline fails, an AutoFix agent reviews the build logs, generates a fix, ensures the build passes, and then automatically submits a pull request with the correction. Infrastructure-as-Code agents can take plain language prompts, convert them into Terraform code, validate the code using tools like terraform fmt and tflint, and trigger pull requests via GitHub Actions. These agents can also respond to triggers like security vulnerability alerts by implementing fixes and submitting pull requests for human review, all without requiring manual input.
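The final step of such a workflow, opening the pull request, can be sketched against GitHub's REST endpoint for creating pull requests (`POST /repos/{owner}/{repo}/pulls`). The function below only builds the request and does not send it. The repository name, branch names, and the idea of listing passed checks in the description are illustrative assumptions.

```python
import json
import urllib.request
from typing import List


def build_pr_request(owner: str, repo: str, token: str, head: str, base: str,
                     title: str, checks: List[str]) -> urllib.request.Request:
    """Build (but do not send) a GitHub 'create pull request' API call."""
    body_lines = ["Automated change. Validation checks passed:"]
    body_lines += [f"- {check}" for check in checks]
    payload = {
        "title": title,
        "head": head,  # branch containing the agent's fix
        "base": base,  # branch the fix should merge into
        "body": "\n".join(body_lines),
    }
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{owner}/{repo}/pulls",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
        },
        method="POST",
    )


request = build_pr_request(
    owner="acme", repo="infra", token="<token>",  # hypothetical repository
    head="agent/fix-ci", base="main",
    title="Fix failing build",
    checks=["terraform fmt", "tflint"],
)
print(request.full_url)
```

Sending it would be a call to `urllib.request.urlopen(request)` with a real token. Listing the passed checks in the description gives reviewers the evidence alongside the diff.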

Deployment Efficiency

Automating pull request creation significantly cuts down the time spent on infrastructure reviews. Some organizations report reducing review time from 8 hours per week to just 45 minutes, thanks to AI-driven reviews. These agents provide structured feedback, including details like severity levels (e.g., CRITICAL, HIGH), cost impact estimates, and precise line-number references, which make human oversight faster and more effective. For routine tasks like dependency updates or multi-file refactoring, these agents handle everything - from detecting issues to submitting pull requests - streamlining the process further. This speed and efficiency reinforce the role of AI agents in accelerating the deployment cycle.

Integration with Existing Cloud Systems

AI agents connect effortlessly with version control systems through native Git integrations, webhooks, and APIs. They support platforms like GitHub, GitLab, and Bitbucket using YAML configurations (e.g., .github/workflows/pr-agent.yml) and tokens such as GITHUB_TOKEN. Every automated change is meticulously tracked in the commit history and pull request comments, creating a complete audit trail that records what actions were taken, when, and why. Operating within defined boundaries, Kanu ensures SOC 2 Type II compliance, guaranteeing that all automated changes meet the same security standards as manual code reviews.

Comparison Table

Here's a quick-reference table summarizing the core features and advantages of each AI-driven automation method discussed earlier.

Each method offers distinct benefits tailored to specific deployment needs. For example, Intent Capture leverages natural language processing to turn business requirements into actionable technical specifications, ensuring clarity and alignment with organizational goals. Code Generation automates the creation of Terraform, CDK, or CloudFormation templates while avoiding resource duplication by analyzing existing infrastructure. Resource Provisioning takes automation further by using traffic patterns, business calendars, and external data to make smarter autoscaling decisions.

Validation Checks run over 250 automated tests, including syntax validation, security linting with TFLint, and formatting checks, to catch issues before production. Diagnostics simplifies troubleshooting by quickly identifying root causes using synthesized logs and metrics, cutting down a process that often takes hours for human engineers. Error Resolution introduces self-healing capabilities to restart failing components and auto-correct code issues, ensuring systems stay functional and efficient. Lastly, PR Generation and Audit Trails maintain transparency and compliance by generating pull requests only after successful validation and keeping detailed logs for oversight.

| Automation Method | Key Features | Primary Benefits |
|---|---|---|
| 1. Intent Capture | Natural language analysis; clarifying questions; tradeoff evaluation | Provides clarity on requirements; aligns deployments with business objectives |
| 2. Code Generation | Generates Terraform/CDK/CloudFormation; checks existing infrastructure | Reduces manual coding errors; prevents resource duplication |
| 3. Resource Provisioning | Predictive autoscaling; idle resource detection; optimized instance selection | Saves costs (up to 40%); improves resource usage efficiency |
| 4. Validation Checks | Over 250 behavior tests; TFLint security linting; syntax validation | Ensures production readiness; addresses security issues proactively |
| 5. Diagnostics | Anomaly detection; RCA; log and metric synthesis | Identifies problems in seconds; reduces alert fatigue |
| 6. Error Resolution | Self-healing systems; auto code fixes; iterative retries | Minimizes downtime; reduces manual troubleshooting |
| 7. PR & Audit Trails | Automatic PR creation; detailed logs; version control integration | Enhances compliance; ensures visibility and human oversight |

This table helps teams quickly pinpoint the right methods to address their specific deployment challenges, starting with simpler tools like intent capture and code generation before advancing to validation and diagnostics as confidence builds.

Conclusion

AI agents are transforming cloud deployment by replacing rigid, error-prone scripts with intelligent systems that can analyze logs, identify root causes, and resolve issues independently. This evolution from "Human-in-the-loop" to "Human-on-the-loop" allows engineers to focus on defining policies and goals, leaving the execution to AI-driven solutions.

The seven methods discussed - from intent capture to pull request generation - deliver clear benefits. AI agents speed up delivery, significantly lower costs, and improve system reliability through continuous validation and self-healing features. Instead of replacing DevOps engineers, these agents enhance their roles by automating repetitive and context-heavy tasks that often delay deployments.

A good starting point is to deploy read-only agents for tasks like infrastructure mapping and diagnostics. This builds trust before granting them autonomy. To ensure safety, implement Policy-as-Code guardrails with tools like Open Policy Agent to block high-risk commands, regardless of the AI's reasoning. In production, configure agents to require explicit human approval for any state-changing actions.
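The guardrail idea can be sketched as a check that runs before any command an agent proposes. Open Policy Agent policies are written in Rego, so the Python below is only a stand-in that shows the decision logic; the command patterns are hypothetical examples, not a complete policy.

```python
import re

# Hypothetical patterns. Blocked commands are denied no matter who approves.
BLOCKED = [
    r"\bterraform\s+destroy\b",
    r"\bkubectl\s+delete\s+namespace\b",
]

# State-changing commands are allowed only with explicit human approval.
STATE_CHANGING = [
    r"\bterraform\s+apply\b",
    r"\bkubectl\s+(apply|scale|rollout)\b",
]


def authorize(command: str, human_approved: bool = False) -> str:
    """Return 'deny', 'needs_approval', or 'allow' for a proposed command."""
    if any(re.search(pattern, command) for pattern in BLOCKED):
        return "deny"
    if any(re.search(pattern, command) for pattern in STATE_CHANGING):
        return "allow" if human_approved else "needs_approval"
    return "allow"  # read-only commands such as plan or get


for cmd in ["terraform plan", "terraform apply", "terraform destroy -auto-approve"]:
    print(cmd, "->", authorize(cmd))
```

The blocked list is evaluated first, so a high-risk command stays denied even when the agent's reasoning or a human approval flag says otherwise.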

These practices lead to secure, efficient deployments. Kanu AI, for instance, operates as a single-tenant service within your cloud environment, ensuring data stays secure and complies with SOC 2 Type II standards. Over time, the platform adapts to your team's preferences, improving its recommendations and automating time-consuming tasks. As cloud systems grow increasingly complex, AI agents are becoming indispensable for maintaining speed, reliability, and efficiency.

FAQs

How do AI agents safely make changes in production?

AI agents help keep production changes safe by combining validation checks, security measures, and human oversight. They work within secure limits, interact only with approved services, and automate processes while still giving engineers the ability to review and approve changes. Key features like policy enforcement, monitoring tools, and rollback options make it easier to catch problems early, avoid misconfigurations, and ensure systems stay stable during deployments.

What access do AI agents need to my cloud accounts and CI/CD tools?

AI agents often require secure access to your cloud accounts and CI/CD tools to handle tasks such as deployment and resource management. This access is typically granted through methods like API keys, service accounts, or OAuth tokens.

When it comes to CI/CD pipelines, these agents need entry points to essential components like repositories, build servers, and deployment tools. To keep your environment secure and transparent, it’s crucial to implement role-based access controls (RBAC) and restrict permissions to only what’s necessary. This approach minimizes risks while ensuring the agents can perform their tasks effectively.

How do AI agents prevent bad Terraform changes and resource duplication?

AI agents play a key role in safeguarding Terraform deployments by catching potential issues before they become problems. They carefully validate Terraform plans for misconfigurations, security vulnerabilities, and compliance gaps prior to deployment. Beyond that, these agents compare proposed changes with the existing infrastructure, helping to prevent duplicate resources from being created.

This process ensures that your code is accurate, your infrastructure stays intact, and costly errors are avoided. By integrating these intelligent checks, cloud deployment workflows become smoother, while unnecessary risks and expenses are kept in check.
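As a rough sketch of the duplicate check, the code below compares proposed resources against those already recorded in Terraform state. It assumes the version 4 state layout, where a top-level `resources` list holds entries with `mode`, `type`, and `name`; the function names and sample data are hypothetical.

```python
from typing import List, Set, Tuple

Address = Tuple[str, str]  # (resource type, resource name)


def existing_addresses(state: dict) -> Set[Address]:
    """Collect managed resources from a parsed Terraform state document."""
    return {
        (resource["type"], resource["name"])
        for resource in state.get("resources", [])
        if resource.get("mode") == "managed"
    }


def find_duplicates(state: dict, proposed: List[Address]) -> List[Address]:
    """Return proposed resources that already exist in state."""
    known = existing_addresses(state)
    return [address for address in proposed if address in known]


# Illustrative state fragment, not taken from a real deployment.
state = {
    "version": 4,
    "resources": [
        {"mode": "managed", "type": "aws_vpc", "name": "main"},
        {"mode": "data", "type": "aws_ami", "name": "ubuntu"},
    ],
}
proposed = [("aws_vpc", "main"), ("aws_s3_bucket", "logs")]
print(find_duplicates(state, proposed))
```

In practice the state would be loaded with `json.load` from a state file or remote backend export, and a match would prompt the agent to reuse the existing resource instead of declaring a new one.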