AI Agents for Real-Time Validation: How They Work

AI agents are reshaping how software teams ensure reliability by automating real-time validation tasks like monitoring, testing, and fixing issues during deployments. These agents streamline workflows, reduce manual effort, and improve system dependability. Here's a quick breakdown of the key points:

- What They Do: AI agents monitor live systems, analyze logs, identify failures, suggest fixes, and retry deployments until success.

- How They Work: They use a loop of observing, thinking, acting, and reflecting to validate systems dynamically.

- Impact: Teams using AI validation have cut pull request cycle times by 87% and reduced bug escape rates by 40%.

- Example: Kanu AI's QA Agent performs over 250 checks on live systems, ensuring deployments meet requirements and operate correctly.

These agents not only save time but also enhance reliability by addressing issues as they arise in live environments.

How Kanu AI's QA Agent Performs Real-Time Validation

Kanu's QA Agent plays a critical role in automating the delivery process by acting as the final checkpoint before code moves to human review. Unlike traditional testing tools that focus on static code, this agent validates live systems by interacting directly with active endpoints and data paths in your cloud environment. It uses live logs, metrics, and traces to assess actual deployment performance, going beyond what static analysis can reveal.

This validation process is designed to be iterative. When the QA Agent detects a failure, it dives into the logs to identify the root cause, shares this information with the DevOps Agent, and triggers an automatic retry with updated code or infrastructure. This loop continues until all checks are cleared, ensuring the system is ready for production use.
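The retry loop described above can be sketched in a few lines. This is a minimal illustration, not Kanu's implementation: `run_checks`, `diagnose`, and `redeploy` are hypothetical callables standing in for the QA Agent and DevOps Agent, the simulated `env` dictionary replaces a real deployment, and the `max_attempts` cap is an added assumption so the loop cannot run forever.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str = ""


def validation_loop(
    run_checks: Callable[[], list[CheckResult]],
    diagnose: Callable[[list[CheckResult]], str],
    redeploy: Callable[[str], None],
    max_attempts: int = 5,
) -> tuple[bool, int]:
    """Validate, diagnose, and redeploy until every check passes.

    Returns (succeeded, attempts_used). max_attempts is an assumed safety cap.
    """
    for attempt in range(1, max_attempts + 1):
        failures = [r for r in run_checks() if not r.passed]
        if not failures:
            return True, attempt
        # Hand the diagnosis to the deploy step, then validate again.
        redeploy(diagnose(failures))
    return False, max_attempts


# Simulated environment: a function timeout that is too low on the first deploy.
env = {"timeout_s": 3}


def run_checks() -> list[CheckResult]:
    ok = env["timeout_s"] >= 10
    return [CheckResult("handler_completes", ok, "" if ok else "task timed out")]


def diagnose(failures: list[CheckResult]) -> str:
    timed_out = any("timed out" in f.detail for f in failures)
    return "raise_timeout" if timed_out else "unknown"


def redeploy(fix: str) -> None:
    if fix == "raise_timeout":
        env["timeout_s"] = 30


succeeded, attempts = validation_loop(run_checks, diagnose, redeploy)
print(succeeded, attempts)  # True 2
```

Capping the number of attempts means a fix that never converges ends with a clear failure instead of an endless redeploy cycle.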

Key Features of Kanu's QA Agent

The QA Agent performs over 250 automated validation checks on live systems, ensuring they meet expectations under real-world conditions. It even generates its own automated checks based on requirements defined by the Intent Agent, significantly reducing manual effort for engineering teams.

What sets the QA Agent apart is its reliance on real-time observability. Instead of static code analysis, it examines runtime signals like CloudWatch logs, application metrics, and distributed traces. This allows it to catch issues that only surface in live environments, such as misconfigured security groups or incorrect IAM permissions. These capabilities integrate seamlessly with other agents for a smooth and unified delivery process.

Integration with Intent and DevOps Agents

The QA Agent works as part of a three-agent system that handles the entire delivery workflow. The Intent Agent captures requirements and system trade-offs, creating a specification that defines success. The DevOps Agent takes these specifications, generates both application code and infrastructure-as-code (using tools like Terraform, CDK, or CloudFormation), and deploys them to your AWS account.

Once deployment is complete, the QA Agent steps in to ensure the live environment matches the original intent. For example, if an API endpoint returns a 500 error or a Lambda function times out, the QA Agent identifies whether the issue lies in the application code or infrastructure configuration. It then communicates this diagnosis to the DevOps Agent for adjustments. This iterative process continues until the system operates as expected.
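To make the application-versus-infrastructure diagnosis concrete, here is a deliberately simple sketch that classifies a failure from its log lines. The regular expressions and the tie-breaking rule are assumptions chosen for illustration; the article does not describe how the QA Agent actually makes this decision, and a production agent would draw on metrics and traces as well.

```python
import re

# Illustrative signatures only; a real agent would use far richer signals.
INFRA_PATTERNS = [
    re.compile(r"AccessDenied"),
    re.compile(r"is not authorized to perform"),
    re.compile(r"Connection refused"),
]
APP_PATTERNS = [
    re.compile(r"Traceback \(most recent call last\)"),
    re.compile(r"\b(KeyError|TypeError|ValueError)\b"),
]


def classify_failure(log_lines: list[str]) -> str:
    """Return 'infrastructure', 'application', or 'unknown' for a failed check."""
    infra_hits = sum(any(p.search(line) for p in INFRA_PATTERNS) for line in log_lines)
    app_hits = sum(any(p.search(line) for p in APP_PATTERNS) for line in log_lines)
    if infra_hits == 0 and app_hits == 0:
        return "unknown"
    # Ties go to infrastructure: an arbitrary choice for this sketch.
    return "infrastructure" if infra_hits >= app_hits else "application"


iam_logs = [
    "ERROR AccessDeniedException: User is not authorized to perform: dynamodb:GetItem",
]
bug_logs = [
    "Traceback (most recent call last):",
    "KeyError: 'order_id'",
]
print(classify_failure(iam_logs))  # infrastructure
print(classify_failure(bug_logs))  # application
```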

Benefits of Using Kanu for Validation

Kanu's automated validation process accelerates delivery by up to 80%, taking projects from requirements to production faster. By automating live deployment monitoring and error resolution, the QA Agent frees up engineers to focus on building new features.

Security and compliance are baked into the system. The QA Agent operates as a single-tenant service within your VPC, adhering to strict permissions and network boundaries. With no default data egress and SOC 2 Type II compliance, all validation happens securely within your controlled environment, ensuring that sensitive production data and logs remain private.

Steps in Real-Time Validation Using AI Agents

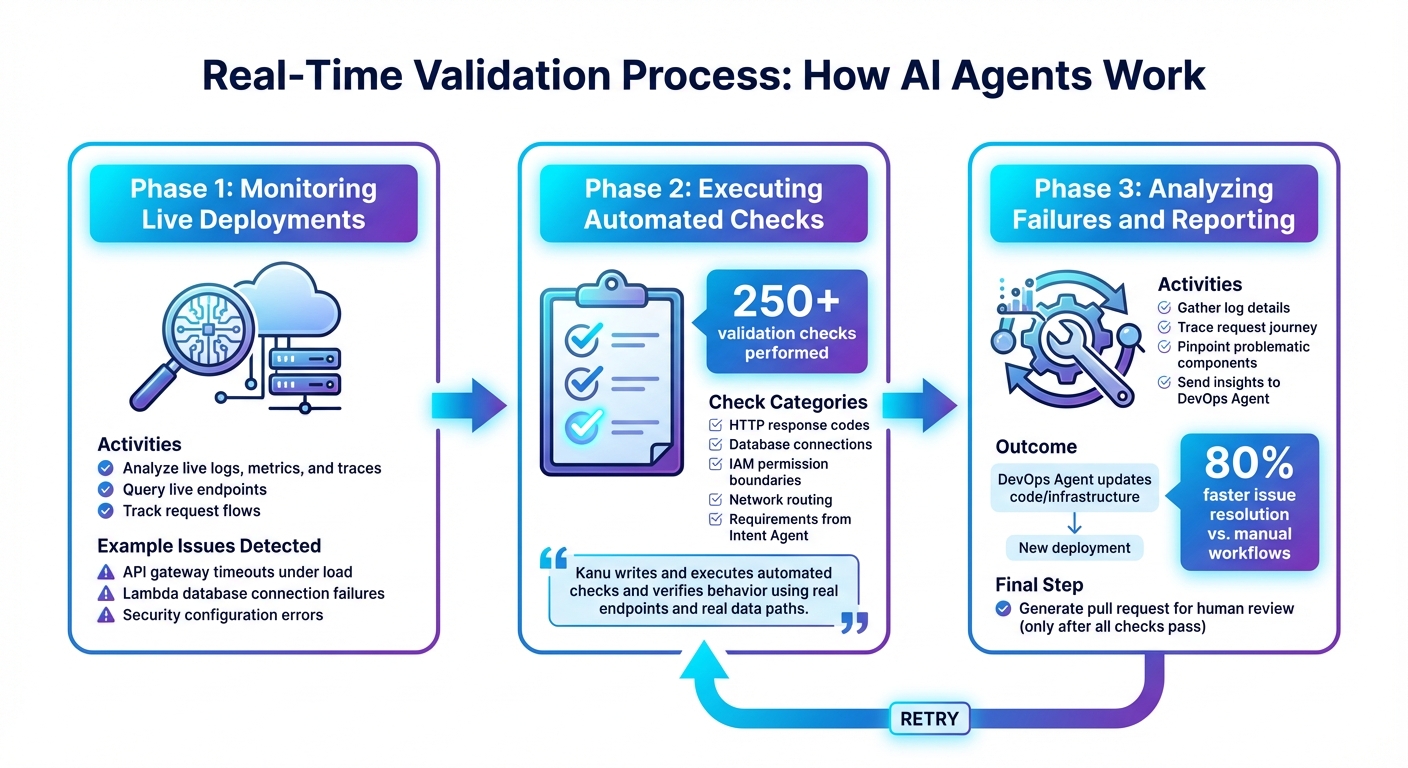

AI Agent Real-Time Validation Workflow: 3-Phase Process

Kanu's validation workflow integrates real-time checks immediately after deployment. This process unfolds in three key phases, ensuring systems are ready for production under real-world conditions.

Monitoring Live Deployments

The QA Agent keeps a close eye on the deployed system by analyzing live logs, metrics, and traces. It actively queries live endpoints to track how requests flow, catching issues that only surface when components interact in a live setting. For instance, it can spot scenarios like an API gateway timing out under heavy load or a Lambda function failing to connect to a database due to incorrect security configurations. Once it captures a snapshot of real-time performance, the QA Agent moves on to run more detailed validation checks.

Executing Automated Checks

The QA Agent performs over 250 validation checks on live endpoints. These checks cover a wide range of elements, such as HTTP response codes, database connections, IAM permission boundaries, and network routing. Additionally, the agent incorporates requirements defined by the Intent Agent, ensuring that validation aligns with the system's goals. This hybrid approach blends fixed, rule-based checks with checks derived from the stated requirements.

"Kanu writes and executes automated checks and verifies behavior using real endpoints and real data paths."

If any checks fail, the agent quickly transitions into diagnostic mode to address the issues.
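A check against a live endpoint can be as simple as comparing the returned HTTP status with the expected one. The sketch below assumes a hypothetical `EndpointCheck` structure and placeholder URLs under `api.example.test`; it is not Kanu's check format. The status fetcher is injectable so the example runs without network access.

```python
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EndpointCheck:
    name: str
    url: str
    expected_status: int


def http_status(url: str, timeout: float = 5.0) -> int:
    """Fetch a URL and return its HTTP status, including 4xx/5xx codes.

    Connection-level errors (urllib.error.URLError) are left to propagate.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def run_endpoint_checks(
    checks: list[EndpointCheck],
    fetch_status: Callable[[str], int] = http_status,
) -> list[str]:
    """Return one human-readable message per failed check."""
    failures = []
    for check in checks:
        actual = fetch_status(check.url)
        if actual != check.expected_status:
            failures.append(
                f"{check.name}: expected {check.expected_status}, got {actual}"
            )
    return failures


# Placeholder endpoints with a stubbed fetcher instead of real HTTP calls.
CHECKS = [
    EndpointCheck("health", "https://api.example.test/health", 200),
    EndpointCheck("orders_list", "https://api.example.test/orders", 200),
]
stub_responses = {
    "https://api.example.test/health": 200,
    "https://api.example.test/orders": 500,
}

failures = run_endpoint_checks(CHECKS, fetch_status=lambda url: stub_responses[url])
print(failures)  # ['orders_list: expected 200, got 500']
```

Passing the fetcher as a parameter keeps the check definitions separate from how they are executed, so the same list can run against a stub in tests and against real endpoints after a deployment.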

Analyzing Failures and Reporting Insights

When a validation check fails, the QA Agent dives into the failure's context by gathering log details, tracing the request's journey, and pinpointing the problematic component using real-time environment data. This information is then handed over to the DevOps Agent, which updates the application code or infrastructure templates and initiates a new deployment. This automated process can speed up issue resolution by as much as 80% compared to manual workflows. The cycle repeats until all checks are successful. At that point, Kanu generates a pull request for human review, allowing engineers to focus on reviewing functional systems instead of troubleshooting broken ones.

Embedding AI Agents in CI/CD Pipelines

AI agents now embed directly into CI/CD pipelines, making deployments smoother and more efficient. Tools like Kanu's QA Agent integrate into existing workflows without requiring teams to overhaul their pipelines. These agents operate within your cloud account using a single-tenant deployment model, ensuring that your existing identity, networking, and security protocols remain intact and data stays secure.

These agents leverage a pull request (PR)-based workflow to protect production environments. They validate code in a development environment as it's generated and deployed. A PR is only created for human review after all automated checks are completed successfully. As Atulpriya Sharma, Sr. Developer Advocate at Testkube, aptly states:

"The bottleneck isn't code generation anymore - it's the validation maze."

Comparison of Manual vs. AI-Driven Validation

AI-driven validation brings significant improvements over manual methods in terms of speed, test coverage, and scalability:

| Feature | Manual/Traditional Validation | AI-Driven Validation |
|---|---|---|
| Speed | 10–35+ minutes per validation cycle | Under 2 minutes for root cause analysis |
| Coverage | Limited; writing new tests is slow | 10× faster test creation with continuous updates |
| Scalability | Struggles with 50+ commits per week | Easily handles 200–300+ commits per week via parallelization |
| Maintenance | Consumes 30–40% of QA resources | Reduces maintenance by 85% |
| Context | Manual correlation across tools | Automated analysis of logs, traces, and code |

Teams adopting AI-powered testing platforms report an 85% drop in test maintenance efforts and a 10× boost in test creation speed. For example, in early 2026, PostHog's platform engineering team utilized an AI agent to manage a CI environment handling 575,000 jobs weekly. The agent scanned up to 940 million rows of log data to detect regressions, demonstrating the scalability and precision of AI-driven validation.

This level of efficiency ensures AI agents fit naturally into existing development ecosystems.

Integration with Existing Tools

AI agents integrate effortlessly with tools like GitHub, GitLab, Slack, Jira, and Kubernetes via the Model Context Protocol (MCP). This approach enables agents to pull data directly from repositories, share findings in Slack, and create actionable issues in Jira - all while working within your team's existing interfaces. For instance, Kanu generates pull requests that align with your current code review workflows in GitHub or GitLab.

To maintain security, these integrations use a layered permissions model. Agents start with read-only access and require explicit human approval for sensitive actions, such as merging code or rotating secrets. This setup allows organizations to embrace AI-driven validation without compromising their established workflows or security protocols.
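One way to picture the layered permissions model is a small authorization function. The action names, the `Level` enum, and the `approved_by` parameter below are hypothetical, introduced only for this sketch; the article does not specify how the permissions are actually encoded.

```python
from enum import Enum
from typing import Optional


class Level(Enum):
    READ = "read"
    WRITE = "write"
    SENSITIVE = "sensitive"


# Hypothetical action names mapped to the access level each one needs.
ACTION_LEVELS = {
    "read_repository": Level.READ,
    "post_slack_message": Level.WRITE,
    "create_jira_issue": Level.WRITE,
    "merge_code": Level.SENSITIVE,
    "rotate_secret": Level.SENSITIVE,
}

DEFAULT_GRANTS = frozenset({Level.READ})  # agents start with read-only access


def authorize(
    action: str,
    grants: frozenset = DEFAULT_GRANTS,
    approved_by: Optional[str] = None,
) -> bool:
    """Decide whether an agent may perform an action."""
    level = ACTION_LEVELS.get(action)
    if level is None:
        return False  # unknown actions are denied
    if level is Level.SENSITIVE:
        # Sensitive actions always need a named human approver.
        return bool(approved_by)
    return level in grants


print(authorize("read_repository"))                  # True
print(authorize("create_jira_issue"))                # False
print(authorize("create_jira_issue",
                grants=frozenset({Level.READ, Level.WRITE})))  # True
print(authorize("merge_code"))                       # False
print(authorize("merge_code", approved_by="alice"))  # True
```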

Best Practices for Using AI Agents

Building on Kanu's real-time validation, these practices tackle integration hurdles and scalability needs for deploying AI agents effectively.

Overcoming Common Integration Challenges

Integrating AI agents can be tricky. One major issue is non-determinism, where outputs can be inconsistent or unpredictable, making it tough to ensure reliable results in production. As Viqus.ai aptly states:

"The most dangerous agent failures are the ones that look like successes. The output is coherent, well-formatted, and completely wrong."

Other challenges include upstream API rate limits or incomplete data, which can cause infinite retry loops or silent failures. Kanu addresses these issues by using real-time signals from logs, metrics, and traces to pinpoint root causes. Additionally, it employs extensive checks to catch configuration mismatches before they disrupt production.

To enhance reliability, consider using deterministic scaffolding. This involves employing a state machine or workflow engine to manage the overall process while delegating specific decision-making tasks to the language model. This approach helps mitigate the reliability ceiling, as most architectures currently achieve task completion rates of only 85–90% on complex workflows. Another key practice is enforcing strict tool interfaces through JSON Schema or Zod, validating all inputs and outputs to prevent malformed data from causing issues.
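As a rough sketch of deterministic scaffolding combined with a strict interface, the example below fixes the workflow as a transition table and only lets the language model choose among the transitions allowed from the current state. The state names and the `{"next": ...}` output shape are assumptions for illustration. Validation is hand-written here to stay dependency-free; a JSON Schema validator would serve the same purpose.

```python
import json

# The workflow itself is fixed; the model never invents new states.
TRANSITIONS = {
    "observe": {"diagnose"},
    "diagnose": {"fix", "escalate"},
    "fix": {"observe"},
    "escalate": set(),  # terminal: a human takes over
}


def next_state(state: str, model_output: str) -> str:
    """Validate the model's raw output and return the next state.

    The output must be a JSON object of the form {"next": "<state>"} where
    <state> is reachable from the current state. Anything else is rejected.
    """
    try:
        data = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError("model output is not valid JSON") from exc
    if not isinstance(data, dict) or set(data) != {"next"}:
        raise ValueError("model output must be an object with only a 'next' key")
    choice = data["next"]
    if not isinstance(choice, str) or choice not in TRANSITIONS[state]:
        raise ValueError(f"transition from {state!r} to {choice!r} is not allowed")
    return choice


print(next_state("diagnose", '{"next": "fix"}'))  # fix

for bad_output in ['{"next": "deploy_to_prod"}', "I think we should fix it"]:
    try:
        next_state("diagnose", bad_output)
    except ValueError as err:
        print("rejected:", err)
```

Because every model response passes through `next_state`, a coherent but wrong answer can at worst pick a legal transition; it cannot skip steps or jump to an undefined action.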

By following these structured methods, you can build AI agents that are more reliable and better suited for production environments.

Ensuring Scalability and Compliance

To scale AI agents effectively, treat them as distributed systems. Use strategies like hard token budgets, step limits, and model routing to balance complex reasoning tasks with simpler ones efficiently.
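The budget and routing ideas can be sketched as follows. The limits, task names, and model labels are placeholders invented for this example, not values from any specific platform.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a run would exceed its step or token allowance."""


@dataclass
class RunBudget:
    max_steps: int
    max_tokens: int
    steps_used: int = 0
    tokens_used: int = 0

    def charge(self, tokens: int) -> None:
        """Record one agent step, refusing it if a hard limit would be passed."""
        if self.steps_used + 1 > self.max_steps:
            raise BudgetExceeded("step limit reached")
        if self.tokens_used + tokens > self.max_tokens:
            raise BudgetExceeded("token budget exhausted")
        self.steps_used += 1
        self.tokens_used += tokens


# Placeholder task kinds that justify the more capable (and costlier) model.
COMPLEX_TASKS = {"root_cause_analysis", "infrastructure_fix"}


def route_model(task_kind: str) -> str:
    """Send complex reasoning to a large model and everything else to a small one."""
    return "large-reasoning-model" if task_kind in COMPLEX_TASKS else "small-fast-model"


budget = RunBudget(max_steps=3, max_tokens=1000)
budget.charge(400)
budget.charge(500)
try:
    budget.charge(200)  # would bring the total to 1,100 tokens
except BudgetExceeded as exc:
    print(exc)  # token budget exhausted

print(route_model("root_cause_analysis"))  # large-reasoning-model
print(route_model("log_summary"))          # small-fast-model
```

Checking the limits before recording the step means a rejected call leaves the counters unchanged, so the caller can decide whether to stop or hand off to a human.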

For compliance, adopt a policy-as-code framework with machine-readable rules. Kanu’s single-tenant architecture, for example, operates securely within your AWS VPC and adheres to SOC 2 Type II standards. For critical actions, such as production deployments or data deletions, require human approval to prevent automation errors. Additionally, keep prompts, evaluation datasets, and agent policies under version control in Git so that performance changes can be tracked and managed over time.
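A policy-as-code rule set can be as small as a JSON document evaluated before each action. The rule format, action names, and effects below are assumptions made for this sketch rather than any established policy language; dedicated policy engines offer far more expressive rules.

```python
import json

# A machine-readable policy that could live in Git next to the agent's prompts.
POLICY_JSON = """
{
  "default": "deny",
  "rules": [
    {"action": "read_logs",   "environment": "*",    "effect": "allow"},
    {"action": "deploy",      "environment": "dev",  "effect": "allow"},
    {"action": "deploy",      "environment": "prod", "effect": "require_approval"},
    {"action": "delete_data", "environment": "*",    "effect": "require_approval"}
  ]
}
"""


def evaluate(policy: dict, action: str, environment: str) -> str:
    """Return the effect of the first matching rule, or the policy default."""
    for rule in policy["rules"]:
        if rule["action"] == action and rule["environment"] in ("*", environment):
            return rule["effect"]
    return policy["default"]


policy = json.loads(POLICY_JSON)
print(evaluate(policy, "deploy", "dev"))          # allow
print(evaluate(policy, "deploy", "prod"))         # require_approval
print(evaluate(policy, "delete_data", "dev"))     # require_approval
print(evaluate(policy, "rotate_secret", "prod"))  # deny
```

Defaulting to `deny` means any action the policy does not mention is blocked until someone adds a rule for it, and that rule change shows up as a reviewable diff.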

These strategies ensure AI agents remain scalable, secure, and compliant, while reinforcing the value of real-time validation in maintaining reliable production systems.

Conclusion

AI agents are transforming the path from idea to deployment. The real challenge in software delivery isn’t just writing the code - it’s ensuring that the code functions correctly in real-world environments. Kanu AI’s QA Agent tackles this head-on by performing extensive live-system validations, analyzing logs, and iterating until the code is ready for production use.

With this approach, organizations can deliver up to 80% faster. This acceleration comes from automating the entire delivery cycle. When failures occur, the agent identifies the issue, resolves it, and retries - removing the need for manual log reviews and speeding up the process significantly.

Kanu AI’s integration of AI agents creates a streamlined validation workflow. Its single-tenant design operates securely within your AWS VPC, adhering to SOC 2 Type II compliance and respecting your existing security protocols. The multi-agent system - including the Intent, DevOps, and QA agents - ensures that code is not only generated efficiently but also validated rigorously before any pull request reaches human review.

This shift from manual validation to AI-driven processes isn’t just about speed; it’s about delivering consistent and reliable results. By compressing pull request cycle times by 87% and reducing bug escape rates by 40%, these agents enable a more predictable and dependable software delivery process. Combining AI’s efficiency with strict validation criteria ensures every step meets the highest standards.

FAQs

How do AI agents validate a live deployment in real time?

AI agents keep an eye on live deployments by using continuous monitoring and feedback loops. They follow workflows from start to finish, evaluating sessions based on metrics such as accuracy, efficiency, and robustness. These evaluations help identify potential issues early, acting as safeguards to block regressions before they become a problem. By analyzing logs, metrics, and user interactions, the validation process is constantly refined, ensuring the agent's behavior stays consistent and meets expectations.

What happens when a validation check fails during deployment?

When a validation check fails during deployment, the failure is caught before it can affect production. The QA Agent gathers the relevant logs and traces, pinpoints the failing component, and passes its diagnosis to the DevOps Agent, which updates the application code or infrastructure and redeploys. The cycle repeats until every check passes, and only then is a pull request created for human review.

How do AI validation agents stay secure and compliant inside my AWS VPC?

AI validation agents operate securely within your AWS VPC by adhering to strict organizational security standards. They handle tasks like automated security reviews and advanced penetration testing, ensuring compliance and robust protection. These agents work throughout the development lifecycle, validating security from initial design to final deployment. This proactive approach helps catch and address vulnerabilities early, reducing risks before they become critical issues.